One of the hardest parts of making music is not writing, arranging, or editing. It is deciding whether an idea deserves those efforts in the first place. A chorus might sound powerful in imagination but flat in execution. A lyric may read well on the page but lose energy once sung. That is where an AI song generator becomes relevant: it lets people test a song idea before they commit serious time to building it.

That is a subtle but important role. Many creative tools are evaluated as if they must deliver final perfection immediately. In reality, the more useful question is often whether they help people make better decisions earlier. A platform that can turn a rough concept into a listenable version quickly may save hours of effort on ideas that were never going to work, while also helping stronger ideas reveal themselves sooner.

Why Early Validation Matters In Music

A song idea is fragile in its early stage. It often exists only as language, memory, rhythm, or a vague emotional picture. Until it becomes audio, it is difficult to judge accurately. People tend to overestimate ideas they have not heard, and underestimate ideas they have not explored.

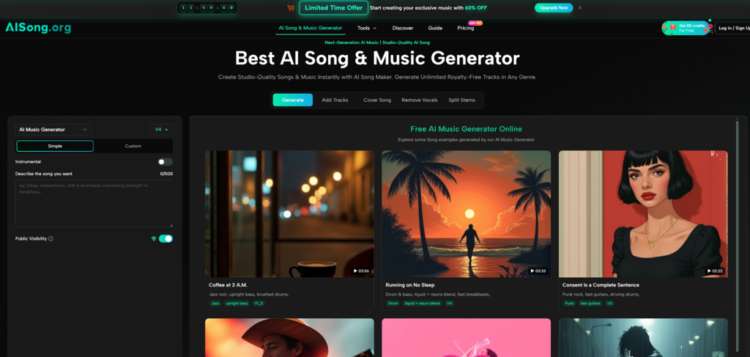

AISong appears to be built around solving that early validation problem. Based on its public workflow, the platform gives users a way to move from concept to sound through a sequence that is simple enough for non-specialists but still structured enough to feel intentional. It is not only about making songs. It is about helping people hear whether they are moving in the right direction.

Hearing An Idea Changes How You Judge It

Once a concept becomes a track, even a rough one, several things become clearer very quickly. You can hear whether the emotional tone matches the words. You can hear whether the pacing drags. You can hear whether a style choice feels natural or forced. Those insights are difficult to access when the idea remains abstract.

That is why generation speed matters. It is not just a convenience feature. It changes when evaluation becomes possible.

A Tool For Choosing, Not Just Producing

In my observation, strong creative tools often improve judgment more than they improve raw output. AISong seems aligned with that pattern. By offering multiple creation paths, model options, and refinement tools, it gives users ways to compare alternatives instead of accepting a single answer as final.

How The Platform Supports Early Testing

AISong’s public guide suggests a workflow that is relatively straightforward, which makes sense for a product designed to help people move from uncertainty to clarity.

Step 1: Define The Song Idea

The process begins with either a plain-language description or a lyric-led setup. A user can choose a simple mode for direct prompting or a custom mode for more structured control.

Why This First Decision Is Important

The choice between prompt-first and lyric-first affects the kind of testing a user wants to do. Prompt-first creation is useful for checking broad direction, such as genre, mood, or energy. Lyric-first creation is more useful when the user already knows the message and wants to test how it behaves musically.

Step 2: Choose The Generation Model

The public guide describes multiple model versions, each with different trade-offs. That structure is useful because not every evaluation scenario needs maximum quality.

Why Different Models Help Different Tests

If the goal is fast idea checking, a lighter or cheaper model may be enough. If the goal is hearing how a more polished version behaves, a stronger model may be the better choice. That distinction makes the platform more practical because it reflects different creative intentions rather than forcing one all-purpose workflow.

Step 3: Tune The Output Direction

AISong's music generator also includes settings for vocal choice and style adherence, among others. These controls give the user some influence over how closely the song follows the original instruction.

Why Small Directional Controls Improve Testing

A good test needs the right conditions. If a creator wants to know whether a soft acoustic idea works, an overly experimental result may not answer that question. Basic controls help the user test the intended direction more fairly.

Step 4: Listen, Compare, And Continue

Once the song is generated, the process can continue through regeneration and additional tools. That is essential because early validation rarely happens from a single version.

Why Comparison Usually Beats One-Shot Output

In my experience with generative tools, the first result is often less important than the pattern across several results. If two or three versions all fail in the same way, the issue may be the concept itself. If one version suddenly works, the idea may be stronger than it first appeared. AISong’s regenerate-and-refine logic seems well suited to that kind of comparison.

Why The Supporting Tools Matter

AISong becomes more interesting when viewed not only as a generator but as a testing environment. Its surrounding features make that interpretation stronger.

Lyrics Assistance Helps Evaluate Message Fit

For users who have a theme but not finished lyrics, the built-in lyric support makes the testing process faster. Instead of pausing the workflow to write everything manually, they can generate a structured lyrical starting point and hear how it functions in song form.

That does not remove the need for editing. But it does make it easier to evaluate whether the emotional premise is promising enough to refine.

Vocal And Stem Separation Aid Analysis

The platform’s vocal remover and stem splitter tools are practical because they support listening in parts rather than only in full mix form. A creator may want to hear the instrumental alone, isolate the vocal component, or inspect how the arrangement behaves when separated into stems.

Why Partial Listening Improves Decision-Making

Sometimes a song works because the instrumental carries it. Sometimes the vocal phrasing is the weak point. Separation tools help users identify where the strength or weakness actually sits. That can make revision more targeted.

Add Tracks Helps Test Hybrid Ideas

AISong also appears to support workflows where users add vocals to existing instrumental material or add instrumentation to vocal ideas. This matters because many music concepts are incomplete but still worth testing.

A producer might have a beat without a topline. A songwriter might have words without an arrangement. A tool that helps merge those fragments can accelerate validation without demanding a full studio setup.

Where AISong Creates Practical Value

| Testing Scenario | AISong Approach | Likely Benefit |
| --- | --- | --- |
| Checking whether an idea works at all | Fast prompt or lyric-based generation | Quickly reveals whether the concept has musical potential |
| Comparing versions of the same idea | Regeneration and model choice | Helps identify stronger directions |
| Evaluating message against sound | Lyrics-to-music workflow | Useful for theme-driven songwriting |
| Inspecting arrangement components | Vocal remover and stem splitting | Supports clearer analysis of what works |
| Developing incomplete ideas | Add tracks and song extension | Turns fragments into better test material |

What Feels Useful And What Still Has Limits

AISong looks useful because it reduces the time between hypothesis and evidence. Instead of debating endlessly whether a song idea might work, users can hear it and respond to what is actually there. That alone can make the creative process more disciplined.

Still, the tool should be approached with realistic expectations. AI-generated music remains dependent on input quality, prompt specificity, and willingness to iterate. A result that feels generic may not mean the concept is weak; it may mean the instruction was too broad. Likewise, a result that feels close but not quite right may still require several rounds of adjustment.

Where It Seems Especially Effective

The platform seems especially effective for early-stage validation, directional testing, and fast concept comparison. Those are areas where speed and variety matter more than total precision.

Where Human Judgment Still Leads

Taste remains central. A creator still has to decide what feels memorable, emotionally consistent, and worth refining. The platform can generate options, but it cannot decide which version best represents the intent behind the song.

Why This Changes The Role Of The Creator

What tools like AISong really change is not the existence of creativity, but its timing. They let creators react earlier. Instead of spending hours building a draft before hearing whether it works, users can hear candidates quickly and spend their energy choosing, revising, and guiding.

That can be a meaningful improvement. Many ideas fail not because they are bad, but because they remain untested for too long. A fast validation tool keeps creative momentum alive. In that sense, AISong is not only a music generator. It is a decision-making aid for people who want to know, sooner rather than later, whether a song deserves to keep growing.